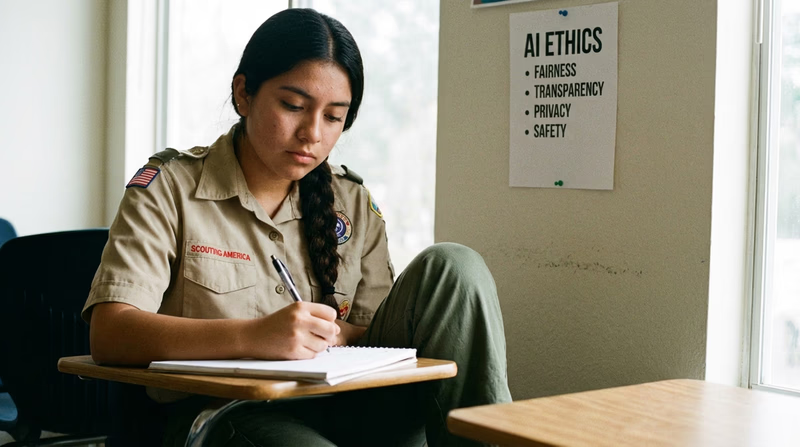

Req 4c — Your Ethical Guidelines

You have studied AI bias, privacy, and decision-making. You have wrestled with tough “What Would You Do?” scenarios. Now comes the creative part: writing your own set of rules for how AI should — and should not — be used. Think of this as your personal “AI Code of Ethics.”

Why Personal Guidelines Matter

Major organizations have already published their own AI ethics guidelines — companies like Google, Microsoft, and IBM, as well as institutions like the European Union and the United Nations. But this requirement asks you to develop your own guidelines. Why?

Because ethics is personal. You need to decide what YOU believe is right and wrong when it comes to this powerful technology. Your guidelines will reflect your values, your experiences, and your understanding of AI’s impact on the world.

How to Build Your Guidelines

Step 1: Choose Your Principles

Start by choosing 5–8 principles that you believe should govern the use of AI. Here are categories to consider, but you should phrase these in your own words:

- Fairness: AI should treat all people equally, regardless of race, gender, age, or background.

- Transparency: People should know when they are interacting with AI, and companies should be able to explain how their AI makes decisions.

- Privacy: AI should only collect and use personal data with clear permission, and people should be able to see and delete their data.

- Accountability: When AI causes harm, there should be a clear chain of responsibility — someone must be answerable.

- Safety: AI systems should be tested thoroughly before being used in situations where people could be harmed.

- Honesty: AI should not be used to deceive people (deepfakes, fake reviews, impersonation).

- Human oversight: AI should assist humans, not replace human judgment in critical decisions.

- Benefit: AI should be developed to benefit society as a whole, not just the people who build it.

Step 2: Write Each Guideline

For each principle, write:

- The guideline in one clear sentence

- A brief explanation of why it matters (2–3 sentences)

- A real-world example showing why you included it

Step 3: Consider Conflicts

Good ethics acknowledges that principles can sometimes conflict with each other. For example:

- Privacy might conflict with safety (sharing health data could save lives but may expose private information)

- Transparency might conflict with innovation (companies may not want to reveal their methods)

- Fairness might conflict with accuracy (adjusting an AI system to be fairer might reduce its overall accuracy)

Address at least one conflict in your guidelines and explain how you would handle it.
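If you like to organize your work with code, the three steps above can be sketched as a small Python checklist. This is only one possible way to structure a draft, not an official format: the names `Guideline`, `Conflict`, and `check_draft` are made up for this illustration, and the checks simply mirror the steps as written (5–8 principles, three parts per guideline, at least one conflict addressed).

```python
from dataclasses import dataclass


@dataclass
class Guideline:
    """One entry in a personal AI code of ethics (hypothetical structure)."""
    principle: str  # Step 1: the principle, e.g. "Fairness"
    rule: str       # Step 2: the guideline in one clear sentence
    why: str        # Step 2: a brief explanation of why it matters
    example: str    # Step 2: a real-world example


@dataclass
class Conflict:
    """Step 3: two principles that can clash, and how you would handle it."""
    first: str
    second: str
    resolution: str


def check_draft(guidelines, conflicts):
    """Return a list of problems; an empty list means Steps 1-3 are covered."""
    problems = []

    # Step 1: choose 5-8 principles.
    if not 5 <= len(guidelines) <= 8:
        problems.append(
            f"Step 1: expected 5-8 principles, found {len(guidelines)}"
        )

    # Step 2: every guideline needs a rule, an explanation, and an example.
    for g in guidelines:
        for part in ("rule", "why", "example"):
            if not getattr(g, part).strip():
                problems.append(
                    f"Step 2: '{g.principle}' is missing its {part}"
                )

    # Step 3: at least one conflict must have a written resolution.
    if not any(c.resolution.strip() for c in conflicts):
        problems.append("Step 3: no conflict has been addressed yet")

    return problems


# Example: an unfinished draft with one guideline and no conflicts.
draft = [
    Guideline(
        principle="Fairness",
        rule="AI should treat all people equally.",
        why="Unfair systems can hurt people who had no say in the design.",
        example="",  # still to be written
    )
]
for problem in check_draft(draft, conflicts=[]):
    print(problem)
```

Running the example prints one line for each part of the draft that still needs work. A checklist like this only confirms that the pieces are present; whether a guideline is well reasoned is still up to you and your counselor.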

What Professional Guidelines Look Like

To give you inspiration, here are some principles from real-world AI ethics guidelines:

Google’s AI Principles (2018 Version, Summarized)

- Be socially beneficial

- Avoid creating or reinforcing unfair bias

- Be built and tested for safety

- Be accountable to people

- Incorporate privacy design principles

- Uphold high standards of scientific excellence

- Be made available for uses that accord with these principles

The EU AI Act (Summarized Core Ideas)

- Certain AI systems must meet transparency requirements, such as labeling AI-generated content

- High-risk AI (for example, in healthcare, law enforcement, and education) faces strict requirements

- AI that manipulates people or exploits vulnerabilities is banned

- People have the right to know when they are interacting with AI

Presenting Your Guidelines

When you share your guidelines with your counselor, here are ways to make your presentation strong:

- Write them neatly: A typed or handwritten document with numbered guidelines looks professional.

- Use your own voice: These should sound like YOU, not like a corporate document.

- Be prepared to defend your choices: Your counselor may challenge a guideline to see how you think.

- Acknowledge uncertainty: It is perfectly fine to say “I’m not sure about this one because…”

- Connect to real examples: Reference specific situations you learned about in Requirements 4a and 4b.