Req 2a — History of Digital Technology

Your grandparents probably did not have a computer in their house growing up. Your parents might remember a time before smartphones. You have never known a world without the internet. Each generation has experienced a completely different relationship with digital technology — and understanding that arc of change helps you appreciate just how fast things are moving.

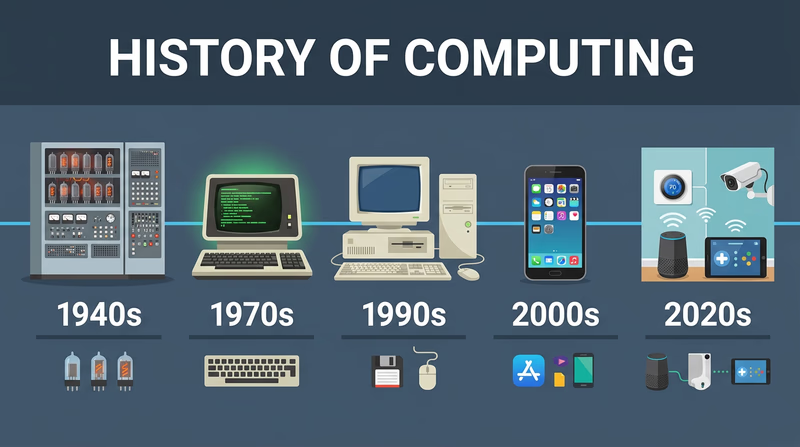

A Timeline of Digital Breakthroughs

The 1940s–1950s: The Dawn of Computing

The first electronic computers were built to solve military problems during and after World War II. ENIAC (1945) could calculate artillery firing tables. UNIVAC (1951) became the first commercially produced computer — and famously predicted the outcome of the 1952 presidential election on live television, stunning the nation.

These machines used vacuum tubes — glass tubes the size of light bulbs that acted as electronic switches. They generated enormous heat, consumed massive amounts of electricity, and broke down constantly. Programming meant physically rewiring cables or feeding in stacks of punched cards.

The 1960s–1970s: Shrinking the Computer

The invention of the transistor (1947) and then the integrated circuit (1958) replaced fragile vacuum tubes with tiny, reliable silicon chips. By the late 1960s, computers had shrunk from room-sized to refrigerator-sized.

In 1969, the U.S. Department of Defense connected computers at four universities and research centers in a network called ARPANET, the earliest ancestor of the internet. That same year, NASA used computers with less memory than a modern calculator to guide Apollo 11 to the Moon.

The 1970s brought the microprocessor — an entire computer processor on a single chip. This breakthrough made personal computers possible.

The 1980s: Computers Come Home

The Apple II (1977), IBM PC (1981), and Macintosh (1984) brought computers into homes and schools. For the first time, ordinary families could own a computer. These machines stored programs and files on floppy disks. Early disks held only a few hundred kilobytes, and even the later high-density 3.5-inch disks held just 1.44 megabytes, less than a single smartphone photo today.

Video games also exploded during this era. The Atari 2600, Nintendo Entertainment System, and arcade cabinets introduced millions of kids to digital technology for the first time.

The 1990s: The Internet Changes Everything

The World Wide Web went public in 1991, and by the late 1990s, millions of households had dial-up internet connections. The screeching sound of a modem connecting became the soundtrack of a generation. Email began to replace letters for everyday correspondence. Search engines like Yahoo! and early Google made information searchable. Online shopping began with sites like Amazon (founded 1994).

The 2000s: Mobile Takes Over

Apple launched the iPhone in 2007, and smartphones quickly became the most important computing device in most people’s lives. Social media platforms — Facebook, YouTube, Twitter — changed how people communicated, shared news, and built communities. Wi-Fi became standard in homes, schools, and coffee shops.

The 2010s–Today: AI, Cloud & IoT

Cloud computing moved storage and processing power off your device and into massive data centers. Artificial intelligence went from science fiction to everyday reality — powering voice assistants, recommendation algorithms, language translation, and image generation. The Internet of Things connected billions of everyday objects to the network, from doorbells to refrigerators.

Comparing Generations

The best way to prepare for this requirement is to actually sit down and talk with a parent, grandparent, or other adult about their experience with technology. Here are some questions to guide that conversation:

Generational Interview Questions

Use these to guide your conversation

- What was the first computer you ever used? Where was it?

- Did you have a computer at home growing up? When did your family get one?

- How did you communicate with friends before texting and social media?

- What did you use to listen to music? (Records? Cassettes? CDs? MP3 players?)

- How did you do research for school projects before the internet?

- When did you get your first cell phone? What could it do?

- What technology change surprised you the most?

The Speed of Change

One of the most striking things about digital technology is how fast it evolves. Moore’s Law, an observation that Intel co-founder Gordon Moore first made in 1965 and revised in 1975, predicted that the number of transistors on a computer chip would double roughly every two years. The prediction held up remarkably well for decades, driving exponential growth in computing power while costs dropped.
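To get a feel for what doubling every two years adds up to, here is a minimal Python sketch. The function name `projected_transistors` and the 1,000-transistor starting chip are made up for illustration; they are not real chip data.

```python
def projected_transistors(start_count, start_year, target_year, doubling_years=2.0):
    """Project a transistor count forward, assuming it doubles every `doubling_years`."""
    doublings = (target_year - start_year) / doubling_years
    return start_count * 2 ** doublings


# Hypothetical chip: 1,000 transistors in year 0 (illustrative numbers only).
for years_later in (2, 10, 20, 40):
    count = projected_transistors(1_000, 0, years_later)
    print(f"After {years_later:>2} years: about {count:,.0f} transistors")
```

Forty years means twenty doublings, which turns a thousand into roughly a billion. That is why exponential growth seems slow at first and then overwhelming.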

To put it in perspective:

| Year | Typical Storage | Cost per Gigabyte |
|---|---|---|
| 1980 | 10 MB hard drive | ~$100,000/GB |
| 1995 | 1 GB hard drive | ~$1,000/GB |
| 2005 | 100 GB hard drive | ~$1/GB |
| 2025 | 2 TB SSD | ~$0.05/GB |

The pattern is clear: technology gets dramatically more powerful and cheaper over time.
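The arithmetic behind that pattern can be sketched in Python using only the approximate figures from the table above. The names `COST_PER_GB`, `fold_drop`, and `halving_time_years` are illustrative, and the results are only as precise as the rounded table values.

```python
import math

# Approximate cost per gigabyte in US dollars, copied from the table above.
COST_PER_GB = {1980: 100_000.0, 1995: 1_000.0, 2005: 1.0, 2025: 0.05}


def fold_drop(start_year, end_year):
    """How many times cheaper a gigabyte became between two years."""
    return COST_PER_GB[start_year] / COST_PER_GB[end_year]


def halving_time_years(start_year, end_year):
    """Average number of years it took the price to fall by half."""
    halvings = math.log2(fold_drop(start_year, end_year))
    return (end_year - start_year) / halvings


print(f"1980 to 2025: about {fold_drop(1980, 2025):,.0f} times cheaper")
print(f"Price halved roughly every {halving_time_years(1980, 2025):.1f} years")
```

With these rough numbers, a gigabyte became about two million times cheaper in 45 years, which works out to the price halving about every two years.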

Computer History Museum: Timeline of Computer History. An interactive timeline covering key milestones in computing from the 1930s to today.

You now have a solid picture of where digital technology came from. Next, let’s look at where it might be going.